SparseWorld: Real-World Cities in Minecraft

As the computing power of personal computers rapidly expands, games have ever more resources available. These resources have largely been spent on improving graphical realism and fidelity and on driving improvements in “artificial intelligence” for non-player characters. Computers now have the memory and CPU horsepower to drive more expansive, immersive worlds, but the human effort needed to build game worlds has only grown as graphical realism has increased. Although some games, such as Minecraft, use procedurally generated worlds, such worlds are valued mostly as a “blank canvas” for sandbox-style play. We believe that enough data exists to machine-generate accurate game worlds from real-world locations. SparseWorld is a proof-of-concept project that harnesses distributed computing and large datasets to build expansive, recognizable game worlds with minimal human effort.

Built from 2013 to 2014 and covered by Ars Technica in 2014, the SparseWorld project originally generated extremely simplified real-world environments for Minecraft. It became the inspiration for Geopipe, the deep-tech startup I founded in 2016.

How It Worked

SparseWorld draws on a combination of datasets to build worlds. Proof-of-concept conversion scripts written in Python combine these datasets, first generating realistic terrain and then converting buildings and structures and placing them onto the terrain:

- Elevation: USGS EROS service

- Landcover: USGS EROS service

- Orthoimagery (satellite imagery): USGS EROS service

- Building models: (planned) Google Earth

- Street correction: (planned) OpenStreetMap (OSM)
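To illustrate how elevation and landcover might combine into terrain, here is a minimal sketch that turns one elevation sample and one landcover class into a column of Minecraft blocks. It assumes the landcover data uses the USGS National Land Cover Database (NLCD) class codes; the block choices, the sea-level offset of 64, and the names `NLCD_TO_BLOCK`, `surface_block`, and `build_column` are hypothetical illustrations, not SparseWorld's actual rules.

```python
# Hypothetical mapping from NLCD land-cover class codes (an assumed landcover
# product) to Minecraft surface block names. Block choices are illustrative.
NLCD_TO_BLOCK = {
    11: "water",     # Open Water
    12: "snow",      # Perennial Ice/Snow
    21: "grass",     # Developed, Open Space
    22: "stone",     # Developed, Low Intensity
    23: "stone",     # Developed, Medium Intensity
    24: "stone",     # Developed, High Intensity
    31: "gravel",    # Barren Land
    41: "grass",     # Deciduous Forest
    42: "grass",     # Evergreen Forest
    43: "grass",     # Mixed Forest
    52: "grass",     # Shrub/Scrub
    71: "grass",     # Grassland/Herbaceous
    81: "grass",     # Pasture/Hay
    82: "farmland",  # Cultivated Crops
    90: "grass",     # Woody Wetlands
    95: "grass",     # Emergent Herbaceous Wetlands
}


def surface_block(nlcd_code: int, default: str = "dirt") -> str:
    """Surface block for a land-cover class, falling back to dirt for unknown codes."""
    return NLCD_TO_BLOCK.get(nlcd_code, default)


def build_column(elevation_m: float, nlcd_code: int, sea_level_y: int = 64) -> list[str]:
    """Blocks from y=0 up to the surface (list index == y), one block per meter."""
    # Shift real-world elevation so sea level sits at sea_level_y, then clamp to
    # the 1..255 build range of 2013-era Minecraft worlds.
    surface_y = max(1, min(sea_level_y + round(elevation_m), 255))
    column = ["bedrock"]
    for y in range(1, surface_y):
        # Up to three meters of dirt directly under the surface, stone below that.
        column.append("stone" if y < surface_y - 3 else "dirt")
    column.append(surface_block(nlcd_code))
    return column
```

For example, `build_column(10.0, 24)` yields a 75-block column topped with stone, as a stand-in for a densely developed city block 10 m above sea level. A fuller version would also seed tree models for the forest classes and handle water depth; both are left out of this sketch.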

SparseWorld requires several steps to convert a region of the real world into a Minecraft world; some can be parallelized, while others have unavoidable dependencies:

- Determine what areas of real-world terrain data need to be fetched, download the relevant elevation, landcover, and orthoimagery data, and stitch together pieces as necessary (GetRegion phase).

- Warp and combine the data into one large 8-layer GeoTIFF image whose layers are elevation, landcover, core depth, bathymetric depth, and the terrain red, green, blue, and infrared (IR) channels (PrepRegion phase).

- Generate Minecraft tiles (16 m × 16 m vertical slices of the terrain) from the terrain data (first half of the BuildRegion phase). This phase is well suited to parallelization.

- Weld tiles into regions, 512 m × 512 m vertical slices of the terrain, each stored in a single file (second half of the BuildRegion phase). This phase is reasonably well suited to parallelization.

- (Planned) Generate 2D splines from OpenStreetMap data and use them to correct shadows and overlaps over streets in the orthoimagery.

- Generate voxelized building, structure, and tree models from Collada 3D models, then place them onto the terrain. This phase can be parallelized with a pool of converter workers, a crossbar, and a pool of terrain region workers.
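The tile and region steps above come down to integer coordinate arithmetic. The sketch below assumes a 1:1 scale (one block per meter), world coordinates already projected to integer meters, and Minecraft's Anvil region-file naming (`r.<x>.<z>.mca`) as the output format; the function names are hypothetical, not SparseWorld's own.

```python
from collections import defaultdict

TILE_SIZE_M = 16        # one Minecraft chunk is 16 x 16 blocks; 1 block = 1 m at 1:1 scale
TILES_PER_REGION = 32   # a region file holds 32 x 32 chunks, i.e. 512 m x 512 m


def tile_of(x_m: int, z_m: int) -> tuple[int, int]:
    """Tile (chunk) coordinates containing world position (x_m, z_m)."""
    # Floor division keeps negative coordinates in the correct tile.
    return x_m // TILE_SIZE_M, z_m // TILE_SIZE_M


def region_of(tile_x: int, tile_z: int) -> tuple[int, int]:
    """Coordinates of the region a tile is welded into."""
    return tile_x // TILES_PER_REGION, tile_z // TILES_PER_REGION


def local_slot(tile_x: int, tile_z: int) -> tuple[int, int]:
    """Tile position inside its region, each in 0..31 (also correct for negatives)."""
    return tile_x % TILES_PER_REGION, tile_z % TILES_PER_REGION


def region_filename(region_x: int, region_z: int) -> str:
    """Anvil-style region file name (assumed output format)."""
    return f"r.{region_x}.{region_z}.mca"


def group_tiles_by_region(tiles):
    """Bucket tiles by destination region; each bucket can be welded independently."""
    regions = defaultdict(list)
    for tx, tz in tiles:
        regions[region_of(tx, tz)].append((tx, tz))
    return dict(regions)
```

Assuming each tile needs only its own footprint of the GeoTIFF, tiles can be generated in parallel (for example with `concurrent.futures.ProcessPoolExecutor.map`), and each bucket returned by `group_tiles_by_region` can then be welded into its region file by a separate worker.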

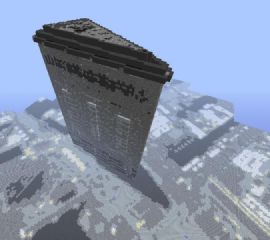
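The crossbar in the final pipeline step can be sketched with in-process queues: each converter worker splits a model's voxels by the 512 m region they fall in and hands each batch to that region's worker, so any converter can feed any region. This is a hypothetical, single-machine illustration using Python threads and `queue.Queue`; a distributed deployment would replace the queues with a network message broker, and every name here is an assumption.

```python
import queue
import threading
from collections import defaultdict

REGION_SIZE_M = 512  # one region file covers 512 m x 512 m at 1:1 scale


def region_key(x: int, z: int) -> tuple[int, int]:
    return x // REGION_SIZE_M, z // REGION_SIZE_M


def converter(model_voxels, region_queues):
    """Converter worker: split one voxelized model by region and route each batch."""
    batches = defaultdict(list)
    for x, y, z, block in model_voxels:
        batches[region_key(x, z)].append((x, y, z, block))
    for key, batch in batches.items():
        q = region_queues.get(key)
        if q is not None:  # voxels outside the generated map are dropped
            q.put(batch)


def region_worker(key, q, results):
    """Region worker: collect batches until the None sentinel, then publish them."""
    placed = []
    while True:
        batch = q.get()
        if batch is None:
            break
        placed.extend(batch)  # a real worker would write these into its region file
    results[key] = placed


def run_crossbar(models, region_keys):
    region_queues = {key: queue.Queue() for key in region_keys}
    results = {}
    workers = [threading.Thread(target=region_worker, args=(key, q, results))
               for key, q in region_queues.items()]
    converters = [threading.Thread(target=converter, args=(m, region_queues))
                  for m in models]
    for t in workers + converters:
        t.start()
    for t in converters:
        t.join()
    for q in region_queues.values():
        q.put(None)  # all converters finished: tell every region worker to stop
    for t in workers:
        t.join()
    return results
```

Routing by region means a building that straddles a region boundary is split between two region workers, and each region file is only ever written by one worker, so no locking on the files themselves is needed.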

Results and Screenshots

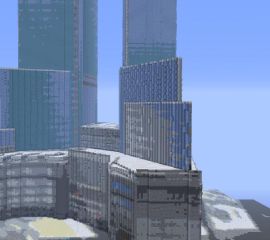

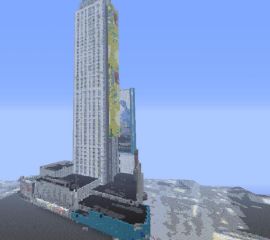

2014 rendering of 1:1 terrain model of Manhattan, complete with elevation, landcover, and orthoimagery data, plus a few building models from Google’s 3D Warehouse. A real-time interactive server map was also available in 2014.

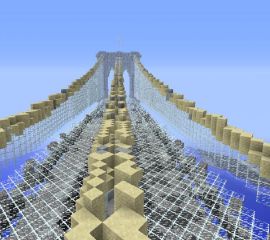

Sample screenshots of real-world models automatically converted into Minecraft buildings by SparseWorld, including Yankee Stadium, the Time Warner Center, the Chrysler Building, the Brooklyn Bridge, the main branch of the New York Public Library, the New York Life Building, the Flatiron Building, Madison Square Garden, and part of Times Square. All of the buildings are placed in their real-world locations within the map above.