Master's Thesis: Scouter

Author’s Note: In 2009-2010, when this project was first conceived and executed, machine learning inference on mobile hardware and augmented reality were both fledgling fields. While today this project would be trivial with off-the-shelf VR hardware and a smartphone plus easy-to-use ML frameworks, in 2010 none of those resources existed. To the author’s knowledge, this was one of the first demonstrations of augmented reality that performed near-real-time analysis of the user’s view.

Facial detection and recognition are among the most heavily researched fields of computer vision and image processing. However, the computation necessary for most facial processing tasks has historically made it unfit for real-time applications. The constant pace of technological progress has made current computers powerful enough to perform near-real-time image processing and light enough to be carried as wearable computing systems. Facial detection within an augmented reality framework has myriad applications, including potential uses for law enforcement, medical personnel, and patients with post-traumatic or degenerative memory loss or visual impairments. Although the hardware is now available, few portable or wearable computing systems exist that can localize and identify individuals for real-time or near-real-time augmented reality.

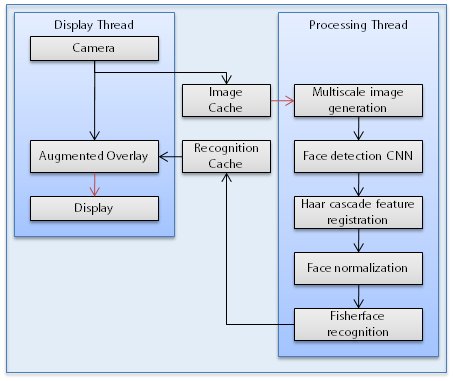

The author presents a system design and implementation that performs facial detection and recognition robust to variations in lighting, pose, and scale. Scouter combines a commodity netbook computer, a high-resolution webcam, and display glasses into a light and powerful wearable computing platform for real-time augmented reality and near-real-time facial processing. A convolutional neural network performs precise facial localization, a Haar cascade object detector is used for facial feature registration, and a Fisherface implementation recognizes size-normalized faces. A novel multiscale voting and overlap removal algorithm is presented to boost face localization accuracy; a failure-resilient normalization method is detailed that can perform rotation and scale normalization on faces with occluded or undetectable facial features. The development, implementation, and positive performance results of this system are discussed at length.
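To make the idea of multiscale voting and overlap removal concrete, here is a minimal Python sketch. It is not the algorithm from the thesis, whose details are not given on this page; it is a generic illustration under stated assumptions. It assumes the detector has been run at several image scales, that each hit has been mapped back to original-image coordinates as a square window with a positive confidence score, and that a face is accepted only when enough overlapping windows "vote" for it. The names `Detection`, `iou`, `merge_detections`, and the `overlap` and `min_votes` thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One candidate face window (hypothetical structure, not from the thesis)."""
    x: float      # left edge, in original-image pixels
    y: float      # top edge, in original-image pixels
    size: float   # side length of the square window
    score: float  # detector confidence, assumed to be positive


def iou(a: Detection, b: Detection) -> float:
    """Intersection-over-union of two square windows."""
    ix = max(0.0, min(a.x + a.size, b.x + b.size) - max(a.x, b.x))
    iy = max(0.0, min(a.y + a.size, b.y + b.size) - max(a.y, b.y))
    inter = ix * iy
    union = a.size ** 2 + b.size ** 2 - inter
    return inter / union if union > 0 else 0.0


def merge_detections(detections, overlap=0.3, min_votes=2):
    """Greedily cluster overlapping windows, then keep well-supported clusters.

    Windows are visited from highest to lowest score. Each window joins the
    first cluster whose seed (its highest-scoring member) it overlaps by at
    least `overlap`; otherwise it starts a new cluster. Clusters with fewer
    than `min_votes` members are discarded as likely false positives, and
    the survivors are replaced by their score-weighted average window.
    """
    clusters = []
    for det in sorted(detections, key=lambda d: d.score, reverse=True):
        for cluster in clusters:
            if iou(cluster[0], det) >= overlap:
                cluster.append(det)
                break
        else:
            clusters.append([det])

    merged = []
    for cluster in clusters:
        if len(cluster) < min_votes:
            continue  # too few votes across scales and positions
        total = sum(d.score for d in cluster)
        merged.append(Detection(
            x=sum(d.x * d.score for d in cluster) / total,
            y=sum(d.y * d.score for d in cluster) / total,
            size=sum(d.size * d.score for d in cluster) / total,
            score=total,
        ))
    return merged
```

In this sketch, three slightly offset windows around the same face collapse into one averaged window, while a single isolated hit is dropped regardless of its score. The thresholds are placeholders that would need tuning against real detector output.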

- Full Thesis: Mitchell, Christopher. “Applications of Convolutional Neural Networks to Facial Detection and Recognition in Wearable Computing and Augmented Reality.” The Cooper Union for the Advancement of Science and Art, 2010. (3.1MB, PDF)

- Technical Poster (4.2MB, PDF)

eeePC901 used for computing

Vuzix VR920 heads-up display with camera

Full hardware platform without human

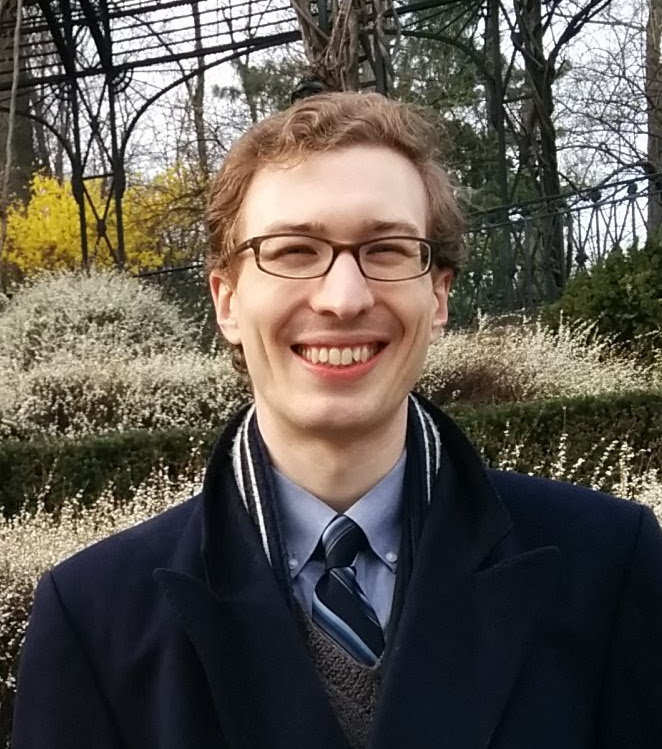

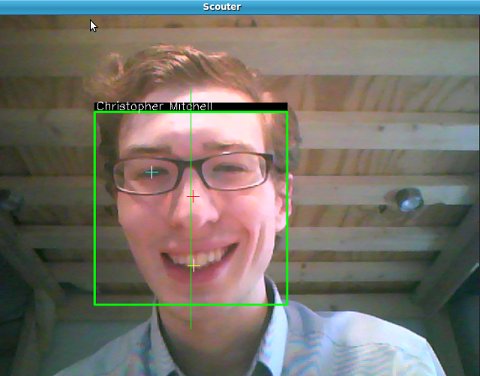

Real-time sample view inside HUD

Planned final interface

Full platform view with human

View of HUD and camera with human

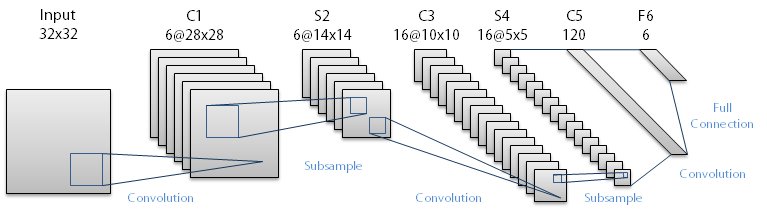

CNN architecture

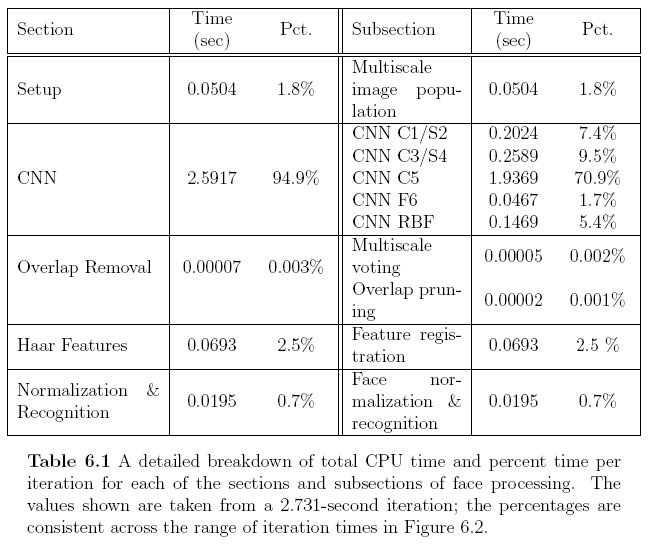

CPU usage

Scouter flowchart
